Question #2cd38

1 Answer

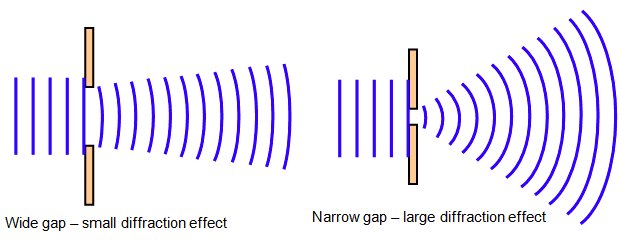

Diffraction is the tendency of light to spread out as it travels, which limits the resolving power of an optical system.

Explanation:

Light can be thought of as a wave spreading from its source. How much it spreads depends on the size of that source, or aperture, compared to the wavelength of the light itself. The smaller the aperture relative to the wavelength, the more spreading we can expect.
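To put rough numbers on this, here is a minimal sketch using the Rayleigh criterion for a circular aperture, θ ≈ 1.22 λ/D. The function name, the 550 nm wavelength, and the example aperture sizes are my own choices for illustration, and the formula is a small-angle approximation that only holds when the aperture is much larger than the wavelength.

```python
import math

def rayleigh_angle(wavelength_m, aperture_m):
    """Approximate angular radius (radians) of the central diffraction spot
    for a circular aperture: theta ~ 1.22 * wavelength / diameter.
    Small-angle approximation; assumes aperture >> wavelength."""
    return 1.22 * wavelength_m / aperture_m

GREEN = 550e-9  # assumed wavelength of green light, in metres

# Hypothetical apertures, chosen only to show the trend.
for label, diameter_m in [("0.1 mm pinhole", 1e-4),
                          ("5 mm opening", 5e-3),
                          ("100 mm telescope", 0.1)]:
    theta = rayleigh_angle(GREEN, diameter_m)
    arcsec = math.degrees(theta) * 3600
    print(f"{label}: {theta:.2e} rad ({arcsec:.2f} arcsec)")
```

Each tenfold increase in aperture shrinks the spreading angle tenfold, which is the trend described above.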

Unless our aperture is infinite, we can expect there to be some amount of diffraction. But when you think about it, the size of most telescopes, microscopes or cameras is pretty big compared to the wavelength of the light. So why do we ever notice it? Usually we don't, as with a camera (most of the time).
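The "most of the time" for a camera can be made concrete with a small sketch. It uses the standard estimate for the diameter of the diffraction blur (the Airy disk) at the sensor, d ≈ 2.44 λ N, where N is the f-number. The 4 µm pixel pitch and the list of f-numbers are assumptions picked for illustration, not values from any particular camera.

```python
def airy_disk_diameter_um(wavelength_um, f_number):
    """Approximate diameter (micrometres) of the Airy disk, out to the
    first dark ring, at the focal plane: d ~ 2.44 * wavelength * N."""
    return 2.44 * wavelength_um * f_number

WAVELENGTH_UM = 0.55   # assumed green light
PIXEL_PITCH_UM = 4.0   # hypothetical sensor pixel size

for n in (2.8, 8, 22):
    d = airy_disk_diameter_um(WAVELENGTH_UM, n)
    verdict = "smaller than a pixel" if d < PIXEL_PITCH_UM else "spread over several pixels"
    print(f"f/{n}: blur diameter {d:.1f} um, {verdict}")
```

With a wide-open lens the blur is about the size of a pixel, so it goes unnoticed; stopped down to a very small aperture, it covers several pixels and the image softens.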

The problem shows up when lenses focus the light: because of this spreading, a lens cannot bring light from a point to a perfect point, only to a small blurred spot. When we try to push the limits of the resolving power of the instrument to see really small things - for a microscope - or really distant things - for a telescope - we run into a limit on what the system can achieve.

This is why bigger is better for a telescope: it gives us a bigger aperture to work with. It's also why we use shorter wavelengths when we want to look at really small things - as in the electron microscope, which uses electrons instead of light because their wavelengths are very short indeed.
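For the microscope side, a minimal sketch using the Abbe estimate of the smallest resolvable spacing, d ≈ λ / (2 NA), shows why a shorter wavelength helps. The numerical aperture of 1.4 (typical of a good oil-immersion objective) and the wavelengths are assumptions for illustration.

```python
def abbe_limit_nm(wavelength_nm, numerical_aperture):
    """Approximate smallest resolvable spacing (nm) for a microscope
    using the Abbe estimate: d ~ wavelength / (2 * NA)."""
    return wavelength_nm / (2.0 * numerical_aperture)

NA = 1.4  # assumed numerical aperture of the objective

for label, wavelength_nm in [("red, 700 nm", 700.0),
                             ("green, 550 nm", 550.0),
                             ("violet, 400 nm", 400.0)]:
    print(f"{label}: about {abbe_limit_nm(wavelength_nm, NA):.0f} nm")
```

Even with violet light the limit stays well above 100 nm, so seeing much finer detail calls for something with a far shorter wavelength.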

Advanced: When we describe light as particles, called photons, they have a wave-like property called the de Broglie wavelength. This is part of the wave packet, which describes the probability of detecting the particle in a particular position. Using the de Broglie wavelength, the entire description above can be repeated quantum-mechanically, yielding the same result!

In the case of light, the de Broglie wavelength is the wavelength of the light itself. But for the electrons used in a microscope, this wavelength is much smaller than that of visible light, making them suitable for seeing things at very small scales. This is the reason for the existence of the electron microscope!
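As a rough check on "much smaller", here is a sketch that computes the de Broglie wavelength λ = h / p of an electron accelerated through a voltage V, including the relativistic correction to the momentum. The 100 kV accelerating voltage is an assumed, typical-looking value for illustration, and the function name is my own.

```python
import math

# Physical constants (SI units)
H = 6.62607015e-34           # Planck constant, J s
M_E = 9.1093837015e-31       # electron rest mass, kg
E_CHARGE = 1.602176634e-19   # elementary charge, C
C = 299792458.0              # speed of light, m/s

def electron_wavelength_m(voltage_v):
    """de Broglie wavelength (metres) of an electron accelerated from rest
    through voltage_v volts, with relativistic momentum:
    p = sqrt(2 m eV * (1 + eV / (2 m c^2)))."""
    energy_j = E_CHARGE * voltage_v
    momentum = math.sqrt(2.0 * M_E * energy_j * (1.0 + energy_j / (2.0 * M_E * C**2)))
    return H / momentum

VOLTAGE = 100e3  # assumed accelerating voltage, volts
lam = electron_wavelength_m(VOLTAGE)
print(f"electron at 100 kV: {lam * 1e12:.2f} pm")
print(f"green light (550 nm) is about {550e-9 / lam:.0f} times longer")
```

The electron wavelength comes out at a few picometres, roughly a hundred thousand times shorter than visible light. In practice, lens aberrations keep real electron microscopes from reaching that diffraction limit, but the short wavelength is what makes atomic-scale imaging possible at all.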